Go Native

Identifying and fixing usability issues for online bookings

Go Native is a company providing serviced apartments across the UK, as well as in a growing number of European and US cities. People can book apartments through its website.

The Challenge

Improve the online booking process and conversion funnel for Go Native customers.

The Process

The Whys

Stakeholders were concerned about two things: why 50% of users were bouncing from the homepage, and why a large proportion of users were not converting through the funnel, even after selecting dates and a preferred apartment for their upcoming stay. Why was this happening?

Further Questions...

Our only data source was Google Analytics, which told us what was happening but not why. So I decided to assess the current homepage, review the booking process, and identify what could be putting users off:

- Were there enough call-to-action (CTA) buttons?

- Were users able to search effectively?

- Was there enough information to make the user want to explore the site further?

Familiarising myself with Go Native

I had never used Go Native as a customer, so the first thing I did was evaluate the site from a user's point of view. I walked through 4 key user journeys for booking an apartment, approaching them as a customer would rather than as a UX expert.

Throughout these journeys, the system was very slow to give me status updates, and when I searched for an apartment, the results were inaccurate. The design itself seemed quite dated, and it felt like it was trying to imitate a well-known, successful competitor without getting the overall experience quite right.

Little to No Research Budget

I needed answers to the whys, and I was confident that some qualitative research could provide them.

In an ideal world, I would have recruited 5-6 Go Native customers and given them a booking task to complete, which would have allowed me to watch them navigate the site. However, with little budget for this sort of activity, I had to be creative in my approach to research.

Heuristic Evaluation

When going through the site as a customer, I had worked hard not to let my UX expertise cloud my findings. However, the site had several usability and accessibility issues, and it would have been careless not to record them. So I decided to conduct a Heuristic Evaluation to gauge the site's overall level of usability.

When carrying out a Heuristic Evaluation, I like to draw not only on Nielsen's principles (and those of other practitioners), but also on principles that consider wider factors that can affect the experience, such as how enjoyable the product is to use. I used a slightly modified version of Dr David Travis's 247 Web Usability Guidelines as the basis for my Heuristic Evaluation.

Guerrilla Research

After conducting the Heuristic Evaluation, I still felt it was important to test the site with real users and observe them. Even with my years of experience in the field, I could have missed some usability problems, and we had neither the budget nor the resources to bring in additional UX experts.

I recruited 6 participants (agency staff from outside the UX team) and set them the following tasks:

- Find an apartment in a specific area and book it for a specific month

- Change/edit your booking

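I used the think-aloud protocol, asking participants to talk through what they were doing and thinking as they worked through each task, and documented my findings as they went.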

Findings and Recommendations

Once the testing sessions were complete, I reviewed the findings and presented them to stakeholders in a clear, digestible report, and I actively encouraged feedback from the business.

At this stage, I proposed two changes:

- Create a streamlined user journey to see if customers could quickly and easily book an apartment

- Create several variations of the homepage for further testing

Homepage Wireframes

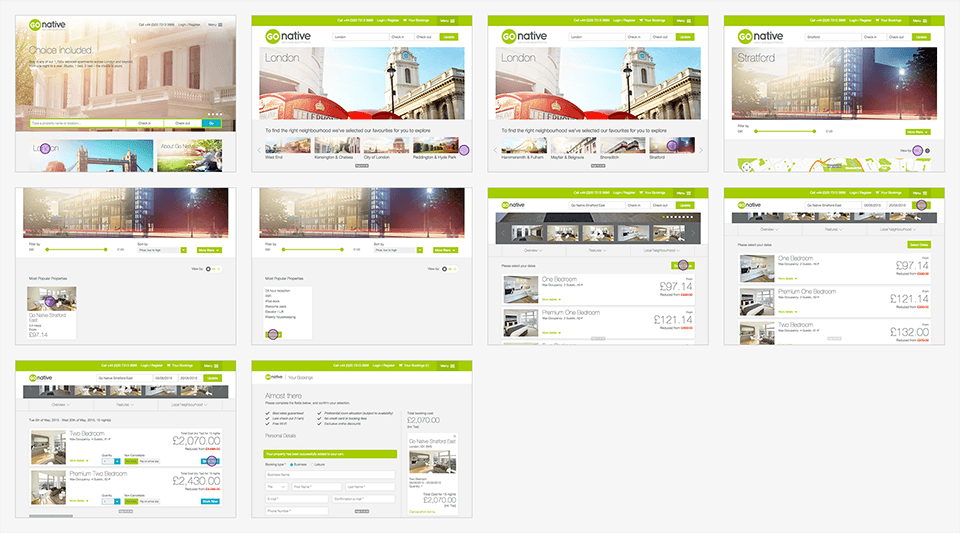

After creating the new booking flow, I produced 3 wireframe versions of the homepage to be put through multivariate testing (MVT).

As mentioned earlier, we didn't have access to customers, so we couldn't easily find out why 50% of them were leaving the homepage. However, drawing on my own observations (including insights from the Guerrilla Research), I came up with some options for a first round of internal testing.

The Outcome

The developers implemented the new booking flow, and we saw a 5% increase in completed bookings.

The homepage wireframes went through several rounds of internal testing, and the third design came out on top.